During Neuromatch Academy 2024, our team explored mechanisms of neuroplasticity in artificial neural networks. Drawing inspiration from the ability of C. elegans to recover from neural damage, we investigated whether bio-inspired neural architectures could exhibit similar adaptive behavior when sensors are removed.

The work builds on the Neural Circuit Architectural Priors (NCAP) framework introduced by Bhattasali et al. (2022). Our central question was whether these networks can display neuroplasticity-like behavior after damage.

Background: the NCAP architecture

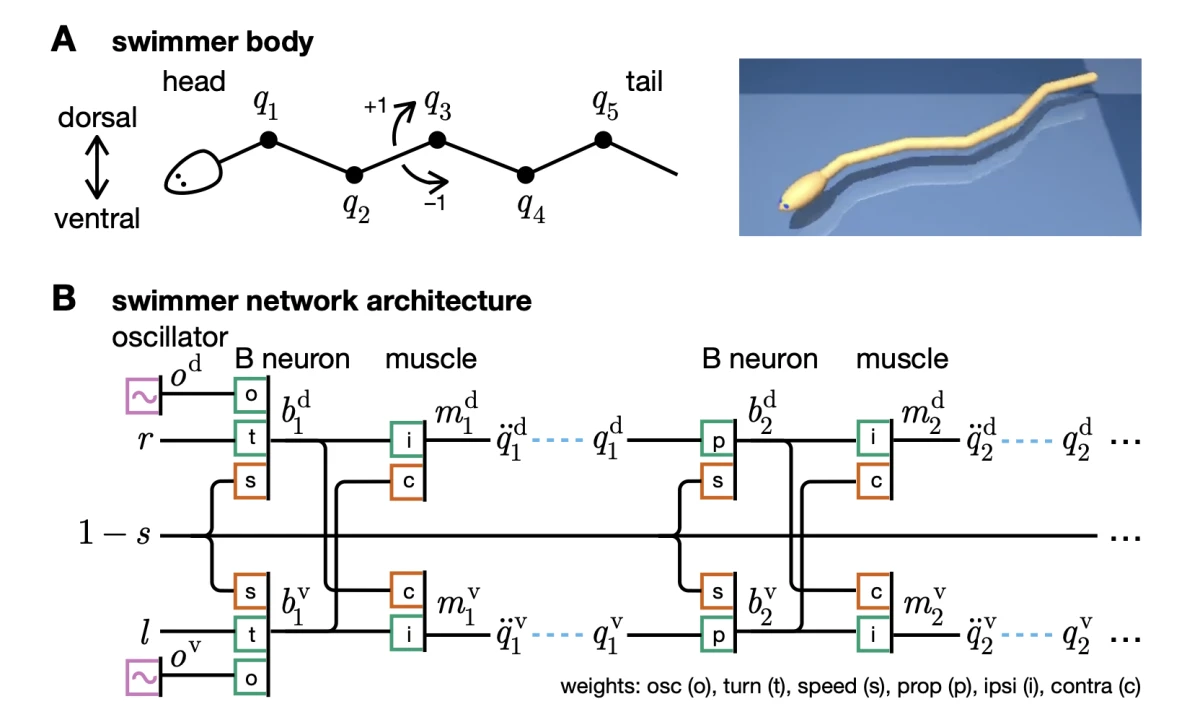

The NCAP architecture sits at the intersection of neuroscience and artificial intelligence. It translates the well-mapped neural circuits of C. elegans, one of the simplest organisms with a complete connectome, into a discrete-time artificial neural network.

The architecture uses several biologically inspired constraints: repeated microcircuits for body segments, synapses constrained as excitatory or inhibitory, specialized units such as B neurons and oscillators, and aggressive weight sharing that leaves only four trainable parameters.
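To make these constraints concrete, here is a minimal sketch of one segment's microcircuit. It is an illustration under stated assumptions, not the authors' implementation: the parameter names (`prop`, `osc`, `ipsi`, `contra`), the wiring, and the clipping range are simplified and hypothetical. It shows two ideas: sign constraints are enforced by clamping each weight, and the same four parameters are reused for every body segment.

```python
import numpy as np

def excitatory(w):
    """Clamp a weight to be non-negative (excitatory synapse)."""
    return np.maximum(w, 0.0)

def inhibitory(w):
    """Clamp a weight to be non-positive (inhibitory synapse)."""
    return np.minimum(w, 0.0)

def graded(x):
    """Bounded activation in [0, 1]; an assumed choice for this sketch."""
    return np.clip(x, 0.0, 1.0)

def segment_torque(prop_d, prop_v, osc_d, osc_v, params):
    """One dorsal/ventral microcircuit. `params` holds the four shared weights."""
    w_prop = excitatory(params["prop"])      # proprioceptive input -> B neuron
    w_osc = excitatory(params["osc"])        # oscillator input -> B neuron
    w_ipsi = excitatory(params["ipsi"])      # B neuron -> same-side muscle
    w_contra = inhibitory(params["contra"])  # B neuron -> opposite-side muscle

    b_d = graded(w_prop * prop_d + w_osc * osc_d)
    b_v = graded(w_prop * prop_v + w_osc * osc_v)
    m_d = graded(w_ipsi * b_d + w_contra * b_v)
    m_v = graded(w_ipsi * b_v + w_contra * b_d)
    return float(m_d - m_v)  # net torque: dorsal minus ventral

# Weight sharing: every segment is evaluated with the same four parameters.
params = {"prop": 1.0, "osc": 1.0, "ipsi": 1.0, "contra": -1.0}
inputs = [(1.0, 0.0), (0.0, 1.0), (0.5, 0.5)]  # (dorsal, ventral) proprioception
torques = [segment_torque(pd, pv, 0.0, 0.0, params) for pd, pv in inputs]
```

Because the clamps sit inside the forward pass, an optimizer can move the raw parameters freely while the effective synapse never changes sign.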

Experimental design

We probed the network's adaptive capabilities along two axes: which sensors were removed (two ablation patterns) and when the damage occurred (three damage scenarios).

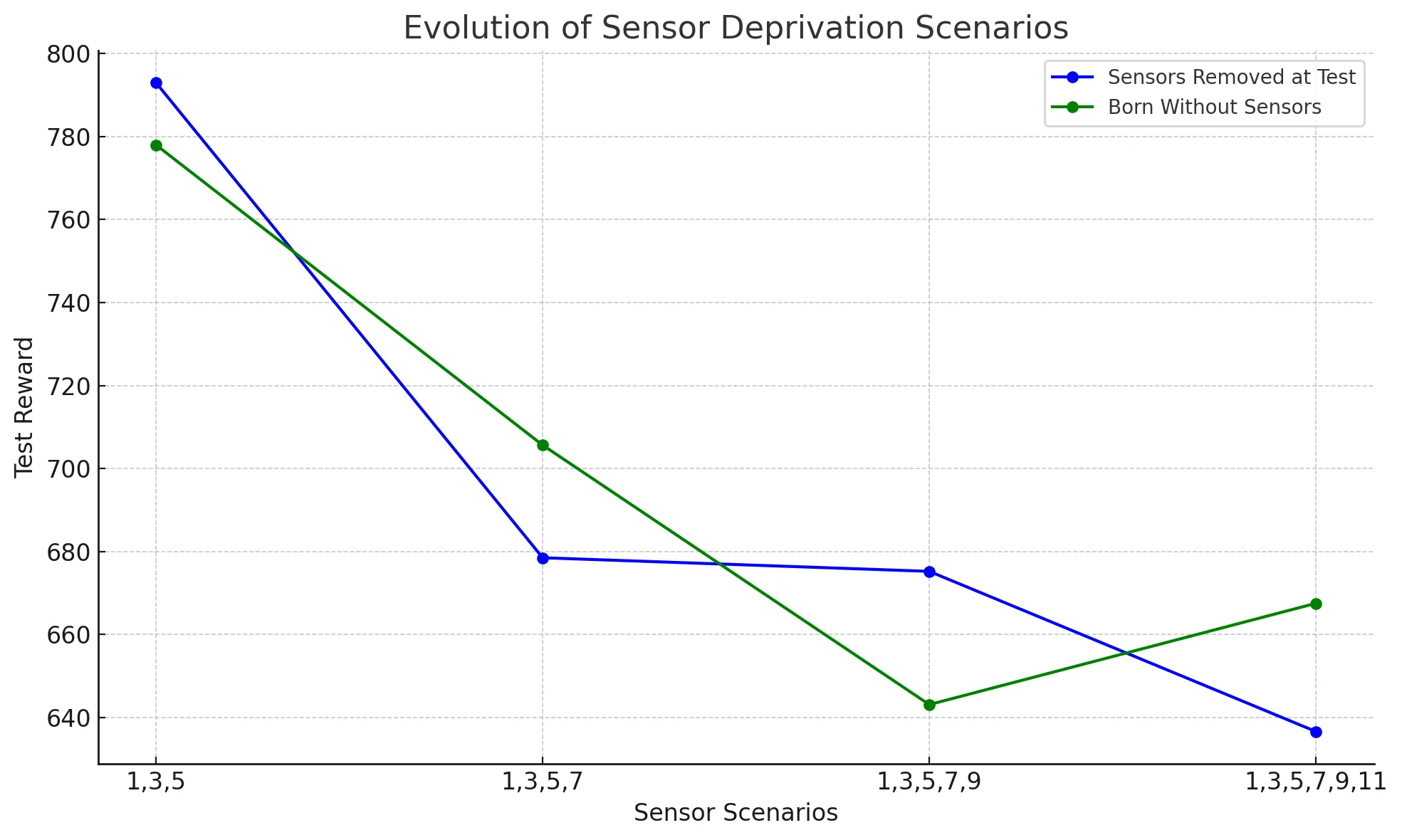

Sensor ablation patterns

- Alternating pattern: removing sensors 1, 3, 5, and so on.

- Sequential pattern: removing consecutive sensors such as 1, 2, 3.
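The two patterns can be expressed as boolean masks over the sensor vector. The sketch below is one possible encoding, not the project's code: the function name `ablation_mask` and its arguments are hypothetical, and sensors are 0-indexed, so "sensors 1, 3, 5" in the list above become indices 0, 2, 4.

```python
import numpy as np

def ablation_mask(n_sensors, n_removed, pattern):
    """Return a boolean mask where False marks a removed sensor."""
    if pattern == "alternating":
        candidates = np.arange(0, n_sensors, 2)  # every other sensor
    elif pattern == "sequential":
        candidates = np.arange(n_sensors)        # consecutive sensors from the head
    else:
        raise ValueError(f"unknown pattern: {pattern!r}")
    if n_removed > len(candidates):
        raise ValueError("n_removed exceeds the sensors available for this pattern")

    mask = np.ones(n_sensors, dtype=bool)
    mask[candidates[:n_removed]] = False
    return mask

alt = ablation_mask(6, 3, "alternating")
seq = ablation_mask(6, 3, "sequential")

# Applying a mask zeroes the readings of the removed sensors.
readings = np.array([0.3, -0.1, 0.8, 0.5, -0.4, 0.2])
damaged_readings = readings * alt
```

Zeroing a reading is only one way to model a missing sensor; replacing it with noise or a constant would be an equally reasonable assumption.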

Damage scenarios

- Born without: networks trained from scratch with specific sensors permanently disabled.

- Removed at test: fully trained networks with sensors disabled only during evaluation.

- Damage and retrain: pre-trained networks damaged and then allowed to adapt through continued training.
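The three scenarios differ only in which phases see the damaged sensors. A small sketch of that schedule, with hypothetical names (`SCENARIOS`, `phase_masks`) and an assumed two-phase train/eval structure:

```python
import numpy as np

# Each scenario is a list of (phase, sensors_damaged) steps.
SCENARIOS = {
    "born_without": [("train", True), ("eval", True)],
    "removed_at_test": [("train", False), ("eval", True)],
    "damage_and_retrain": [("train", False), ("train", True), ("eval", True)],
}

def phase_masks(scenario, damage_mask):
    """Pair each phase of a scenario with the sensor mask in effect."""
    intact = np.ones_like(damage_mask)  # all sensors available
    return [
        (phase, damage_mask if damaged else intact)
        for phase, damaged in SCENARIOS[scenario]
    ]

damage = np.array([False, True, False, True])
schedule = phase_masks("damage_and_retrain", damage)
```

Here `schedule` contains an intact training phase, a second training phase under damage, and a damaged evaluation, which is the only scenario of the three that gives the network a chance to adapt after the damage occurs.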

Key results

The experiments revealed meaningful differences between damage patterns. The alternating pattern degraded more gradually and showed signs of recovery under heavier damage, suggesting compensatory mechanisms. The sequential pattern produced a steeper performance drop and less evidence of adaptation.

Deep learning insights

Sparse connectivity as a feature

NCAP's extreme sparsity initially looks like a limitation, but in these experiments the constraint appears to help robustness. The forced modularity and local connectivity create natural redundancy: when one module fails, others can partially compensate.

Reinforcement learning considerations

The retraining experiments highlight an important RL principle: behavioral adaptation through policy modification. When sensors are damaged, the observations available to the agent change, so the policy that was optimal for the intact body is no longer optimal. NCAP's modular structure allows local policy adjustments without fully forgetting the broader locomotion behavior. We used Proximal Policy Optimization (PPO) for training because of its stability and sample efficiency in continuous-control tasks.
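PPO's stability comes largely from its clipped surrogate objective, which limits how far a single update can move the policy away from the one that collected the data. The sketch below computes that loss with NumPy for illustration. It is not our training code, and `clip_eps=0.2` is the commonly cited default rather than a value taken from our experiments.

```python
import numpy as np

def ppo_clip_loss(new_logp, old_logp, advantages, clip_eps=0.2):
    """Clipped surrogate loss (to be minimized) from the PPO paper."""
    ratio = np.exp(new_logp - old_logp)  # pi_new(a|s) / pi_old(a|s)
    unclipped = ratio * advantages
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * advantages
    # Take the pessimistic (smaller) objective per sample, then negate the mean.
    return -np.mean(np.minimum(unclipped, clipped))

old_logp = np.log(np.array([0.5, 0.5]))
new_logp = np.log(np.array([1.0, 1.0]))  # probability ratio of 2 for both samples

gain_capped = ppo_clip_loss(new_logp[:1], old_logp[:1], np.array([1.0]))
penalty_kept = ppo_clip_loss(new_logp[:1], old_logp[:1], np.array([-1.0]))
```

With a positive advantage the gain is capped at `1 + clip_eps`, while with a negative advantage the full penalty is kept. This asymmetry matters for retraining after damage: the policy can shift toward the new optimum, but only in bounded steps.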

Implications and future directions

The findings suggest that neuroplasticity-like behavior in artificial networks may emerge not from complex learning rules alone, but from architectural constraints. This could inform more robust robotic controllers, adaptive prosthetics, and autonomous systems that must remain useful after sensor damage.

Future work could explore dynamic architecture modification, multi-timescale adaptation, and larger morphologies such as quadrupeds or humanoid robots.

Conclusion

This exploration shows that architectural design choices strongly influence a system's ability to adapt to damage. Despite its minimalist parameterization, NCAP displays resilience that emerges from its biological constraints. The project reinforces the value of combining neuroscience-inspired structure with modern reinforcement learning.

This project was completed as part of Neuromatch Academy 2024. Special thanks to the NCAP paper authors for providing the foundation for this exploration.